The Ultimate 2026 Technical SEO & Site Performance Guide

Strong, well-optimized content cannot succeed on its own in 2026. A comprehensive Technical SEO Audit is the foundation of visibility, because even the best content can’t rank if search engines can’t crawl your site. Google has made it very clear that ranking rests on basic technical signals: crawlability, indexability, canonicalization, and correctly submitted and processed files such as XML Sitemaps and Robots.txt.

This is where a Technical SEO Audit helps. It tests the health of the site from every angle: whether search engines can crawl it easily, whether it is safe to index, and whether load speed is slow enough to hurt rankings and conversions. Building a brand can take months, and even after months of hard work, performance can be undermined by crawling errors, wasteful redirects, HTTPS glitches, and conflicting canonical tags. To rank well, you need a clean technical structure that lets search engines navigate your pages without losing track.

Why Technical SEO Audit Matters More in 2026

A smart Technical SEO Audit does not simply identify broken pages. It reveals where your site is losing authority, wasting crawl budget, and confusing search engines. In practice, that comes down to the following questions:

- Can Google actually find your most important pages?

- Are duplicate pages properly tagged to avoid confusing search engines?

- Is your sitemap clean and easy for bots to follow?

- Are your redirects helping users or just slowing the site down?

- Is every single page on your site secure with HTTPS?

Google’s guidance is clear that Robots.txt governs crawler access, but it does not by itself keep a page out of Google’s index. To keep a page out of the index, site owners need a noindex directive or access controls such as password protection. That single misunderstanding causes a lot of indexing chaos on real sites.
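To make the crawl-versus-index distinction concrete, here is a minimal sketch using Python’s standard `urllib.robotparser`. The robots.txt content and the example.com URLs are made up for illustration. Note what the check answers: whether a crawler may fetch a URL. It says nothing about whether that URL can still end up in the index.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, used only for illustration.
ROBOTS_TXT = """\
User-agent: *
Disallow: /checkout/
"""


def crawl_allowed(robots_txt: str, url: str, user_agent: str = "Googlebot") -> bool:
    """Return True if the given robots.txt text lets user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


print(crawl_allowed(ROBOTS_TXT, "https://example.com/blog/post"))      # True
print(crawl_allowed(ROBOTS_TXT, "https://example.com/checkout/cart"))  # False
```

A `False` here only means the crawler is asked to stay away. If other sites link to that URL, it can still appear in search results, which is why noindex or access controls are the right tools for exclusion.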

The Core Areas Every Technical SEO Audit Should Cover

This is the easiest way to conceptualize Technical SEO in 2026: search engines need to be capable of finding your pages, crawling them with minimal friction, knowing which URLs are important, and being confident that your site is secure and reliable. The following is where most sites either win quietly or fail quietly.

| Audit Area | What to Check | Why It Matters |
| --- | --- | --- |
| Crawl Access | Robots.txt, blocked resources, accidental disallows | Keeps search engines from being blocked from important pages |
| Index Signals | Meta robots, noindex, canonicals | Stops mixed signals and duplicate indexing |
| URL Health | 404s, redirects, broken links, status codes | Preserves user experience and link equity |
| Sitemap Quality | XML Sitemaps with clean canonical URLs | Helps search engines discover pages and surfaces errors faster |
| Security | HTTPS consistency | Builds trust and avoids indexing split across HTTP and HTTPS versions |
| Search Console Monitoring | GSC, page indexing, sitemap reports | Shows how Google is actually processing the site |

How to Perform a Technical SEO Audit without Overcomplicating It

A good audit is structured. A bad audit is just a giant exported spreadsheet nobody fixes.

1. Start with Crawl Access and Indexability

Review Robots.txt first. Your robots.txt file lives at yourdomain.com/robots.txt. Set up disallow rules carefully and make sure you do not block important folders, JavaScript, CSS, product pages, or blog paths. Google states that Robots.txt is primarily for controlling crawling, not for keeping pages out of search results.

Then review robots meta directives. Pages marked noindex, pages blocked from crawling, and pages with conflicting instructions are common reasons visibility drops after migrations or CMS changes. Google’s robots meta tag documentation explains how these directives affect indexing and presentation in search results. Remember that robots.txt is publicly visible: anyone can read it. Never use it to hide sensitive pages, because it does not work that way.
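A common conflict is a page that carries noindex but is also blocked from crawling, so the crawler never gets to read the noindex. The sketch below, built on Python’s standard `html.parser`, shows one way to flag that. The class name, function name, and return labels are illustrative assumptions, and it only looks at meta tags, not the `X-Robots-Tag` HTTP header.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots" content="..."> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            for item in attr.get("content", "").split(","):
                if item.strip():
                    self.directives.add(item.strip().lower())


def index_signal(html: str, is_crawlable: bool) -> str:
    """Combine the meta robots tag with the crawl status of the page."""
    parser = RobotsMetaParser()
    parser.feed(html)
    has_noindex = "noindex" in parser.directives
    if has_noindex and not is_crawlable:
        return "conflict: noindex cannot be read while crawling is blocked"
    if has_noindex:
        return "noindex"
    if not is_crawlable:
        return "blocked from crawling, may still be indexed via links"
    return "indexable"
```

The `is_crawlable` flag is expected to come from a separate robots.txt check. Keeping the two inputs apart mirrors the point of this step: crawl rules and index rules are different controls.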

2. Validate your XML Sitemaps

Your XML Sitemaps should only include live, canonical, index-worthy URLs. By convention the sitemap lives at yourdomain.com/sitemap.xml, and it should be submitted in GSC. Google recommends making sitemaps easily accessible and submitting them through Search Console, where you can also see any processing errors.

If your sitemap contains redirected URLs, non-canonical pages, parameter junk, or noindexed pages, you are basically giving search engines a sloppy roadmap.
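Some of these sitemap problems can be caught without fetching a single page. The following is a rough sketch, using only the Python standard library, that flags non-HTTPS URLs, URLs with query parameters, and duplicates. The function name and the issue labels are assumptions for illustration. Spotting redirected or noindexed URLs would need a live crawl, which this sketch does not attempt.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlparse

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def audit_sitemap(xml_text: str) -> dict:
    """Flag sitemap URLs that are non-HTTPS, parameterized, or duplicated."""
    root = ET.fromstring(xml_text)
    issues = {"not_https": [], "has_parameters": [], "duplicates": []}
    seen = set()
    for loc in root.findall("sm:url/sm:loc", SITEMAP_NS):
        url = (loc.text or "").strip()
        parsed = urlparse(url)
        if parsed.scheme != "https":
            issues["not_https"].append(url)
        if parsed.query:
            issues["has_parameters"].append(url)
        if url in seen:
            issues["duplicates"].append(url)
        seen.add(url)
    return issues


# Hypothetical sitemap used only to exercise the checks.
SAMPLE = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>http://example.com/old-page</loc></url>
  <url><loc>https://example.com/shoes?sort=price</loc></url>
  <url><loc>https://example.com/</loc></url>
</urlset>"""

print(audit_sitemap(SAMPLE))
```

An empty list under every key does not prove the sitemap is clean. It only means these three cheap checks found nothing.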

3. Crawling Errors: How to Fix Website-Crawling Issues

If you want to know how to fix website-crawling issues, stop treating them as minor warnings. Crawling errors often point to deeper structural issues: blank pages, redirect loops, blocked resources, or bad internal linking. Bing and Google both depend on an accessible site architecture to find and index content.

This is where GSC becomes essential in a Technical SEO Audit. Use it to review page indexing reports, submitted sitemaps, and URL-level issues. Search Console is not perfect, but it tells you how Google is actually seeing the site, which matters more than your assumptions. Google’s sitemap and crawling documentation points site owners directly to Search Console reporting for this purpose.

| Error Type | What It Means | Priority | How to Fix It |
| --- | --- | --- | --- |
| 404 Not Found | Page doesn’t exist, so the user or crawler hits a dead end | High | Set up a 301 redirect to the most relevant existing page |
| Redirect Chains | Page A → B → C: too many hops slow crawling | Medium | Collapse to a single direct 301 redirect |
| Duplicate Content | Same content on multiple URLs | High | Add canonical tags pointing to the preferred URL |
| Soft 404 | Empty page returning a 200 OK status | Medium | Add real content or return a proper 404 / 410 response |
| Blocked by robots.txt | Crawler told not to access the page | High | Review robots.txt; remove incorrect disallow rules |
| Sitemap Errors | Sitemap cannot be fetched or parsed, or lists invalid URLs | Medium | Regenerate sitemap and resubmit via Google Search Console |
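If you export crawl data, a small triage function can sort each URL into the buckets from the table. This is only a sketch: the `CrawlResult` fields, the 200-character threshold for a suspiciously empty page, and the labels are all assumptions, and real soft-404 detection is fuzzier than a character count.

```python
from dataclasses import dataclass


@dataclass
class CrawlResult:
    url: str
    status: int            # final HTTP status code
    redirect_hops: int     # redirects followed before reaching the final URL
    body_chars: int        # length of the visible text on the page
    blocked_by_robots: bool = False


def classify(result: CrawlResult, thin_threshold: int = 200) -> str:
    """Map one crawl result to an error type from the table, or 'OK'."""
    if result.blocked_by_robots:
        return "Blocked by robots.txt"
    if result.status == 404:
        return "404 Not Found"
    if result.redirect_hops > 1:
        return "Redirect Chain"
    if result.status == 200 and result.body_chars < thin_threshold:
        return "Possible Soft 404"
    return "OK"
```

Duplicate content and sitemap errors are left out on purpose, since they cannot be judged from a single URL in isolation.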

Pro Tip:

Use the URL Inspection Tool inside Google Search Console to test any individual page. It shows you exactly what Google sees — rendered HTML, index status, last crawl date, and any issues detected. Run it before and after any fix to confirm it worked.

4. Clean up Canonicals and Duplicate Signals

Canonical setup is one of the most ignored parts of a complete Technical SEO Audit. Google explains that canonical signals help consolidate duplicate or highly similar URLs, but mixed signals can weaken that preference.

Check for:

- self-referencing canonicals on primary pages

- duplicate pages with the wrong canonical target

- pagination or filtered URLs competing with core landing pages

- HTTP pages canonicalizing to HTTPS inconsistently

This is not a cosmetic fix. It affects what gets indexed and what gets ignored.
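The first and last checks in that list are easy to automate per page. Here is a minimal sketch with Python’s standard `html.parser` that reads the `rel="canonical"` link and reports anything worth a second look. The function name and problem labels are illustrative assumptions. A "not self-referencing" result is a problem on a primary page but is expected on a duplicate that points to its preferred version.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class CanonicalParser(HTMLParser):
    """Collect href values from <link rel="canonical"> tags."""

    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attr = {name: (value or "") for name, value in attrs}
        if "canonical" in attr.get("rel", "").lower().split():
            self.canonicals.append(attr.get("href", "").strip())


def check_canonical(page_url: str, html: str) -> list[str]:
    """Return a list of canonical problems found on one page."""
    parser = CanonicalParser()
    parser.feed(html)
    if not parser.canonicals:
        return ["missing canonical"]
    problems = []
    if len(set(parser.canonicals)) > 1:
        problems.append("multiple conflicting canonicals")
    target = parser.canonicals[0]
    if urlparse(target).scheme != "https":
        problems.append("canonical is not HTTPS")
    # Trailing slashes are ignored here; treat that as a simplification.
    if target.rstrip("/") != page_url.rstrip("/"):
        problems.append("not self-referencing")
    return problems
```

For example, a filtered URL such as `https://example.com/shoes?color=red` whose canonical is `https://example.com/shoes/` would come back as "not self-referencing", which is the intended setup for that kind of page.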

5. Review 404s and Redirect Logic

Every serious Technical SEO Audit should map broken pages and redirect behavior.

A clean 404 is perfectly fine when a page is genuinely gone. But when a high-value page gets deleted, one that had backlinks, traffic, or real authority behind it, leaving it as a dead end is a mistake. That link equity does not vanish instantly. It just sits there, wasting away. The smart move is to send it to the nearest relevant page with a 301 redirect, not to dump it on the homepage. Redirecting everything to the homepage is lazy SEO, and Google knows the difference.

When troubleshooting website crawling issues, start with the basics. Fix broken internal links first. Then look at your redirect chains: if URL A sends to B, which sends to C, that is a problem. Every extra hop costs crawl efficiency and can bleed link equity along the way. Old URLs need to resolve cleanly, both for users and for Googlebot.

A 301 redirect is the right tool here because it communicates something specific: this move is permanent. Google takes that signal seriously and transfers the ranking power and link equity that the old URL built up over time to the new destination. That matters more than most people realize. Every backlink pointing to a dead page once had value. A 301 keeps that value alive and working for you.

The result? Your site authority stays intact. Your crawl stays efficient. And your SEO maintenance does not quietly fall apart every time a URL changes.
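Collapsing chains is mostly bookkeeping once you have your redirect rules as a source-to-target map. The sketch below assumes exactly that: a plain dictionary exported from your server config or CMS, with made-up paths. It follows each chain to its end, flags loops, and produces a flattened map where every source points straight at its final destination.

```python
def resolve_chain(start: str, redirects: dict[str, str], max_hops: int = 10) -> tuple[list[str], bool]:
    """Follow redirects from start. Return (path taken, True if a loop was hit)."""
    path = [start]
    seen = {start}
    current = start
    # Stop following once the path grows past max_hops entries.
    while current in redirects and len(path) <= max_hops:
        current = redirects[current]
        path.append(current)
        if current in seen:
            return path, True
        seen.add(current)
    return path, False


def collapse_chains(redirects: dict[str, str]) -> dict[str, str]:
    """Point every source directly at its final destination, skipping loops."""
    flattened = {}
    for source in redirects:
        path, is_loop = resolve_chain(source, redirects)
        if is_loop:
            continue  # loops need a human decision, so leave them out
        flattened[source] = path[-1]
    return flattened


# Hypothetical redirect rules: one chain (/a -> /b -> /c) and one loop (/x <-> /y).
rules = {"/a": "/b", "/b": "/c", "/x": "/y", "/y": "/x"}
print(resolve_chain("/a", rules))   # (['/a', '/b', '/c'], False)
print(collapse_chains(rules))       # {'/a': '/c', '/b': '/c'}
```

Any source missing from the flattened map was part of a loop and should be reviewed by hand before it ships.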

6. Enforce HTTPS Everywhere

Google’s documentation continues to treat HTTPS as an important site-quality signal, and in real-world SEO, it is also a trust signal for users.

Check that:

- HTTP versions redirect cleanly to HTTPS

- internal links point to HTTPS URLs

- canonicals reference HTTPS

- sitemap URLs are HTTPS

- there are no mixed-content issues

If your site still behaves like it has two versions, your SEO foundation is weaker than you think.
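Several items on that checklist come down to finding `http://` references left in your HTML. Here is a small sketch using Python’s standard `html.parser` that lists every `href` or `src` still pointing at plain HTTP. The class and function names are assumptions for illustration. Hits on `src` are mixed-content candidates, while hits on `href` are links or canonicals to update.

```python
from html.parser import HTMLParser


class InsecureRefParser(HTMLParser):
    """Record every href/src attribute that still uses plain http://."""

    URL_ATTRS = ("href", "src")

    def __init__(self):
        super().__init__()
        self.insecure = []  # list of (tag, attribute, url)

    def handle_starttag(self, tag, attrs):
        for name, value in attrs:
            if name in self.URL_ATTRS and value and value.lower().startswith("http://"):
                self.insecure.append((tag, name, value))


def find_insecure_refs(html: str) -> list:
    """Return (tag, attribute, url) tuples for all plain-HTTP references."""
    parser = InsecureRefParser()
    parser.feed(html)
    return parser.insecure
```

This only inspects static HTML. Resources injected by JavaScript or referenced inside CSS would need a rendered crawl or a browser console check.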

7. Core Web Vitals in 2026

Since Google made Core Web Vitals an official ranking signal, there has been a lot of noise about them. Let’s cut through it.

Three metrics matter. Here is what each one measures and what a good score looks like:

| Metric | What It Measures | Good Score | Quick Fix |
| --- | --- | --- | --- |
| LCP (Largest Contentful Paint) | How fast the main content of the page loads | Under 2.5 seconds | Compress images, use a CDN, and preload key assets |
| INP (Interaction to Next Paint) | How quickly the page responds when you click or type | Under 200 ms | Reduce JavaScript, defer non-critical scripts |
| CLS (Cumulative Layout Shift) | Whether the page jumps around while loading | Under 0.1 | Set width and height on all images and embeds |

Heads up:

INP replaced FID (First Input Delay) as an official Core Web Vitals metric in March 2024. If your reports or auditing tools still reference FID as a primary signal, that data is outdated. Update your benchmarks.
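To turn raw numbers into something you can sort a report by, you can bucket each metric against its thresholds. The "good" limits below come from the table above. The upper limits for "poor" (4.0 seconds, 500 ms, 0.25) are Google’s published Core Web Vitals thresholds and are not stated elsewhere in this guide. The function name is an assumption for illustration.

```python
# (good limit, poor limit) per metric.
THRESHOLDS = {
    "LCP": (2.5, 4.0),    # seconds
    "INP": (200, 500),    # milliseconds
    "CLS": (0.1, 0.25),   # unitless layout-shift score
}


def rate(metric: str, value: float) -> str:
    """Bucket a Core Web Vitals value as good, needs improvement, or poor."""
    good_limit, poor_limit = THRESHOLDS[metric]
    if value <= good_limit:
        return "good"
    if value <= poor_limit:
        return "needs improvement"
    return "poor"


print(rate("LCP", 2.1))   # good
print(rate("INP", 350))   # needs improvement
print(rate("CLS", 0.3))   # poor
```

Feed it field data where you can. Lab measurements from a single test run are useful for debugging but can differ from what real users experience.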

SEO Maintenance — Treat It Like a System, Not a One-Time Job

Here is where most businesses drop the ball. They do a technical SEO audit, fix everything on the list, and then go back to business as usual. Six months later, they are dealing with the same issues — or worse ones. Websites are living things. Content gets added, pages break, redirects pile up, and new crawl errors appear.

SEO maintenance means treating your site’s health the way you treat your car. You do not just fix it when it breaks down. You service it regularly to prevent breakdowns from happening in the first place.

Here is a simple rhythm that works:

- Weekly: Monitor GSC for new crawl errors and coverage losses. Set up email notifications so you find out about issues before your rankings do.

- Monthly: Run a full site crawl. Check for new broken links, redirect chains, and any thin content or orphaned pages that have appeared since your last crawl.

- Quarterly: Review Core Web Vitals across your most important page types. Check your sitemap accuracy and robots.txt rules, and repeat these checks after any redesign or migration of your site.
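The monthly crawl is most useful when you compare it with the previous one instead of reading it from scratch. As a rough sketch, assume each crawl is saved as a simple mapping of URL to HTTP status code (a made-up format for illustration). A short diff then surfaces only what changed.

```python
def new_issues(previous: dict[str, int], current: dict[str, int]) -> dict[str, list[str]]:
    """Compare two crawl snapshots (URL -> status code) and report regressions."""
    report = {"newly_broken": [], "newly_redirected": [], "disappeared": []}
    for url, status in current.items():
        before = previous.get(url)
        if status >= 400 and (before is None or before < 400):
            report["newly_broken"].append(url)
        elif 300 <= status < 400 and before == 200:
            report["newly_redirected"].append(url)
    # URLs the last crawl found that this crawl never reached.
    report["disappeared"] = sorted(set(previous) - set(current))
    return report


# Hypothetical snapshots from two monthly crawls.
last_month = {"/": 200, "/pricing": 200, "/blog/a": 200, "/old": 404, "/gone": 200}
this_month = {"/": 200, "/pricing": 301, "/blog/a": 404, "/old": 404}
print(new_issues(last_month, this_month))
```

URLs under "disappeared" are worth checking for orphaning: a page the crawler can no longer reach through internal links may still exist but has lost its path in.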

What a High-Performing Site Looks Like in 2026

A strong site in 2026 is not just “fast enough.” It is technically predictable.

That means:

- search engines can crawl important pages without friction

- site health stays stable after updates and migrations

- crawlability is not weakened by sloppy templates or plugin conflicts

- SEO maintenance is ongoing, not reactive

The businesses that win are not always the ones publishing the most. They are often the ones with the cleanest technical systems.

The Bottom Line: Don’t Let a Broken Engine Kill Your Best Content

At the end of the day, Technical SEO is not about chasing every algorithm update.

It never was. It is about one thing: making sure nothing blocks your audience from finding you.

You can spend months writing great content. You can build a beautiful landing page. But if the technical side of your site is broken, none of that work pays off. It just sits there. Unseen.

A Technical SEO Audit is how you clear the path. You fix the crawl errors. You clean up messy redirects. You lock down your HTTPS setup. Each of those fixes quietly builds trust with search engines. Not through tricks. Just through consistency and a site that actually works the way it should.

Most people only open the hood when something stops moving. By that point, the rankings have already slipped, and the recovery takes twice as long. Do not be that business. The ones holding strong in 2026 are not doing anything magical. They just stayed on top of the basics while everyone else ignored them.

SEO maintenance works the same way a good habit does. You do not notice it when it is working. You only notice when it stops.

Treat your site health like a system, not a to-do list item you check off once a year.

Build it clean. Keep it clean. Then your content finally gets the chance it deserves.

Let’s Get Your Site Back on Track!

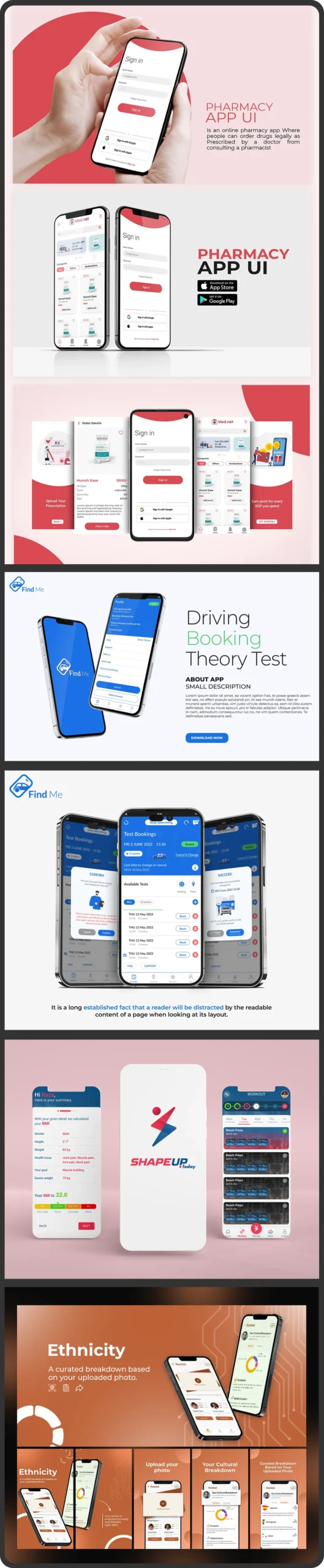

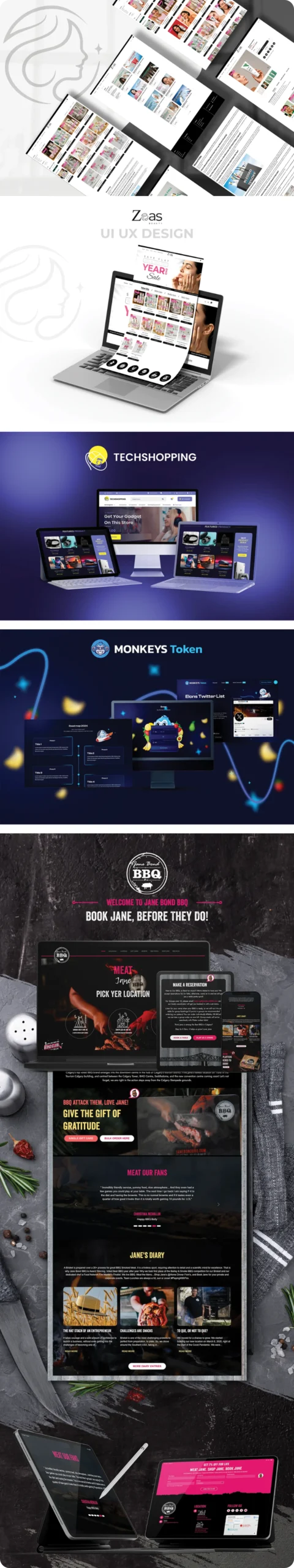

Stop guessing why your rankings are stuck and let The Design Log handle the complete Technical SEO Audit with a full technical deep-dive. We’ll find the hidden errors holding you back and tune your site for maximum speed and visibility. Contact us today to get your Audit and technical fixes done.

Frequently Asked Questions

How frequently do I need to do a technical SEO audit?

Most websites should get a comprehensive technical SEO audit at least quarterly. Monthly crawls are wiser when your site is actively publishing content, running paid ads, or has recently gone through development changes. For a big e-commerce site with thousands of product pages, consider continuous automated monitoring with a tool such as ContentKing or Sitebulb, which can alert you to problems in near real time instead of leaving them until your next scheduled audit.

What is the difference between crawling and indexing errors?

They sound alike, but they are two different issues. A crawling error occurs when Googlebot cannot even access the page, typically because of a server problem, a DNS problem, or a robots.txt block. An indexing error occurs when Google reaches the page but does not include it in search results, frequently because of a noindex tag, thin content, or a canonical tag that points to a different page. Both appear in GSC but require entirely different fixes. Crawling is an access problem. Indexing is a quality or directive problem.

Do 301 redirects lose SEO value?

The idea that they do is among the most prevalent SEO myths. Google has confirmed that 301 redirects pass PageRank, or link equity, to the destination URL. The notion that redirects carry a grave SEO penalty is outdated. That said, redirect chains (when a URL redirects to another, which redirects to another, and so on) are slower to crawl and may slightly dilute the equity passed through them. Always aim to reach the final destination in one step.